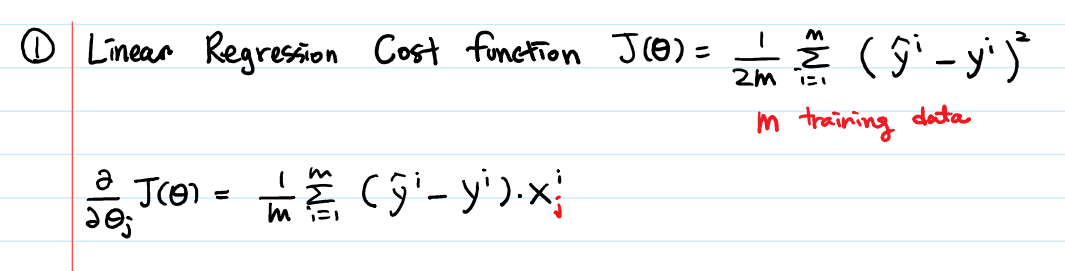

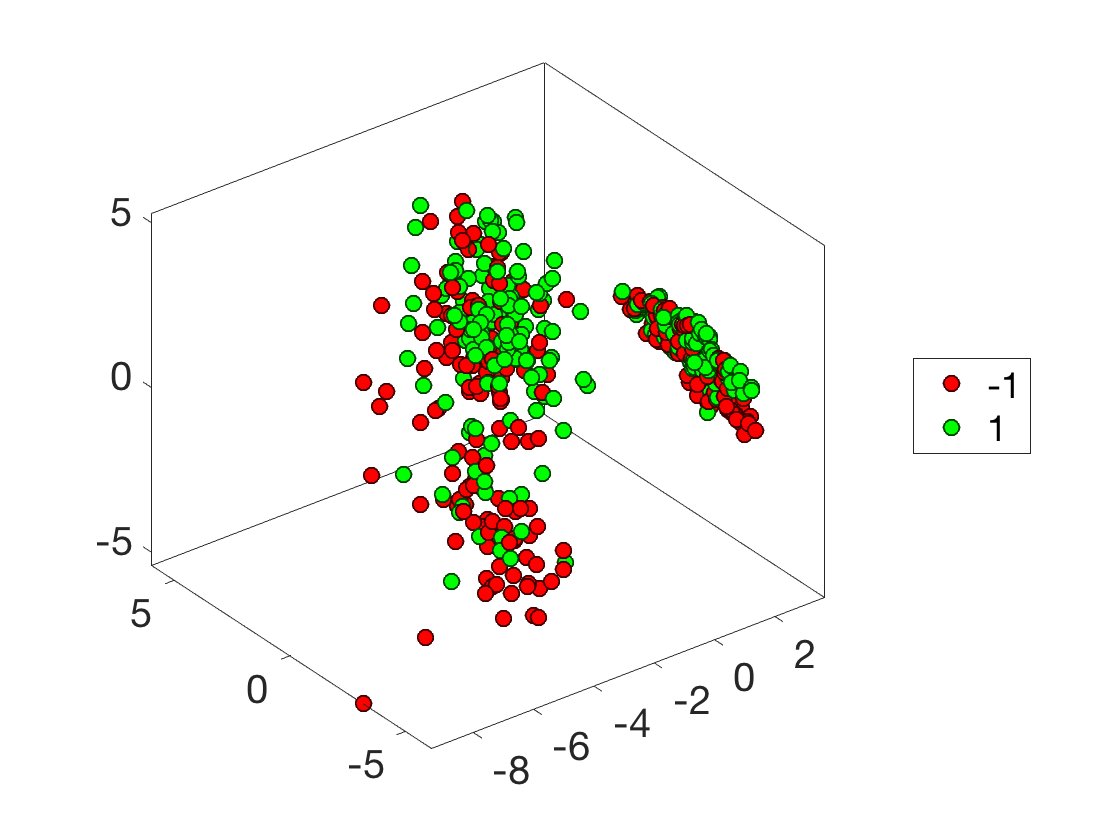

To address the problem of improving generalization while maintaining optimal convergence in large-batch training, we propose to add covariance noise to the gradients. We demonstrate that the learning performance of our method is more accurately captured by the structure of the covariance matrix of the noise rather than by the variance of gradients. Moreover, for the convex-quadratic case, we prove in theory that it can be characterized by the Frobenius norm of the noise matrix. Our empirical studies with standard deep learning model architectures and datasets show that our method not only improves generalization performance in large-batch training, but also does so while keeping optimization performance desirable and without lengthening training.

Batch gradient descent is one of the most used variants of the gradient descent algorithm. The cost function is computed over the entire training dataset for every iteration. One batch is referred to as one iteration of the algorithm, and this form is known as batch gradient descent. Mini-batch gradient descent is an extension of the gradient descent algorithm.
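To make the batch variant concrete, here is a minimal sketch in Python with NumPy. It assumes a linear regression model with a mean-squared-error cost, which the text above does not specify; the function name `batch_gradient_descent`, the learning rate, and the synthetic data are illustrative choices only.

```python
import numpy as np

def batch_gradient_descent(X, y, lr=0.1, n_iters=500):
    """Fit linear-regression weights with full-batch gradient descent."""
    n_samples, n_features = X.shape
    w = np.zeros(n_features)
    b = 0.0
    for _ in range(n_iters):
        # The error (and therefore the cost) uses every training example.
        error = X @ w + b - y
        grad_w = 2.0 * X.T @ error / n_samples
        grad_b = 2.0 * error.mean()
        # Exactly one weight update per full pass over the data.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Synthetic, noiseless data (an assumption made for illustration).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = X @ np.array([3.0, -2.0]) + 1.0
w, b = batch_gradient_descent(X, y)
```

Because each iteration touches the whole dataset, the cost of one update grows with the number of training examples.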

The choice of batch-size in a stochastic optimization algorithm plays a substantial role for both optimization and generalization. Increasing the batch-size used typically improves optimization but degrades generalization. The practical performance of stochastic gradient descent on large-scale machine learning tasks is often much better than what current theoretical tools can.

An Empirical Study of Stochastic Gradient Descent with Structured Covariance Noise appeared in the Proceedings of the Twenty Third International Conference on Artificial Intelligence and Statistics (Proceedings of Machine Learning Research).

# Batch gradient descent how to

This is about implementing Gradient Descent from scratch in Python. A related question is how to implement mini-batch gradient descent in TensorFlow 2. You need to take care with the intuition behind regression using gradient descent. As you do a complete batch pass over your data X, you need to reduce the.

Stochastic Gradient Descent calculates the loss and updates the model for each data point in the training dataset. It uses a single data point at a time, no matter how many data points we have. With today's availability of very large image, audio, and text datasets, stochastic and mini-batch gradient descent have become an integral part of modern.
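The per-data-point update of Stochastic Gradient Descent can be sketched as follows. This is a minimal illustration, assuming linear regression with a squared-error loss; the function name `stochastic_gradient_descent`, the learning rate, and the synthetic data are assumptions and do not come from the text.

```python
import numpy as np

def stochastic_gradient_descent(X, y, lr=0.01, n_epochs=50, seed=0):
    """Fit linear-regression weights, updating after every single example."""
    rng = np.random.default_rng(seed)
    n_samples, n_features = X.shape
    w = np.zeros(n_features)
    b = 0.0
    for _ in range(n_epochs):
        # Visit the examples in a fresh random order each epoch.
        for i in rng.permutation(n_samples):
            # Loss gradient computed from one data point only.
            error = X[i] @ w + b - y[i]
            w -= lr * 2.0 * error * X[i]
            b -= lr * 2.0 * error
    return w, b

# Synthetic, noiseless data (an assumption made for illustration).
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 2))
y = X @ np.array([3.0, -2.0]) + 1.0
w, b = stochastic_gradient_descent(X, y)
```

Each update is cheap because it uses one example, but individual steps are noisier than a full-batch step.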

# Batch gradient descent update

Gradient Descent is one of the key algorithms used in Machine Learning. While training a machine learning model, we require an algorithm to minimize the value of the loss function. Gradient Descent is one such optimization algorithm, used to minimize the loss.

There are mainly three types of Gradient Descent algorithm: batch, stochastic, and mini-batch.

Batch Gradient Descent uses the entire dataset together to update the model weights. It calculates the loss for each data point in the training dataset, but updates the model weights only after all training data points have been evaluated. For example, if we have one thousand data points, then the model's weight update happens after all one thousand data points are evaluated.

Mini-batch Gradient Descent splits the training dataset into small batches. These batches are used to calculate model loss and update model coefficients. For example, if we have one thousand data points, then we create 10 batches of 100 data points each.

Yeming Wen, Kevin Luk, Maxime Gazeau, Guodong Zhang, Harris Chan, Jimmy Ba. An Empirical Study of Stochastic Gradient Descent with Structured Covariance Noise.
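The mini-batch split described above (one thousand data points, 10 batches of 100) can be sketched as below. This is a minimal illustration, assuming linear regression with a mean-squared-error loss; the helper names `iterate_minibatches` and `minibatch_gradient_descent`, the hyperparameters, and the synthetic data are hypothetical and chosen only for the example.

```python
import numpy as np

def iterate_minibatches(X, y, batch_size, rng):
    """Yield shuffled (X_batch, y_batch) pairs covering the dataset once."""
    indices = rng.permutation(len(X))
    for start in range(0, len(X), batch_size):
        batch = indices[start:start + batch_size]
        yield X[batch], y[batch]

def minibatch_gradient_descent(X, y, batch_size=100, lr=0.1, n_epochs=50, seed=0):
    """Fit linear-regression weights, updating once per mini-batch."""
    rng = np.random.default_rng(seed)
    w = np.zeros(X.shape[1])
    b = 0.0
    n_updates = 0
    for _ in range(n_epochs):
        for X_batch, y_batch in iterate_minibatches(X, y, batch_size, rng):
            # Loss gradient computed from the current mini-batch only.
            error = X_batch @ w + b - y_batch
            w -= lr * 2.0 * X_batch.T @ error / len(X_batch)
            b -= lr * 2.0 * error.mean()
            n_updates += 1
    return w, b, n_updates

# One thousand synthetic, noiseless data points (an assumption for illustration).
rng = np.random.default_rng(1)
X = rng.normal(size=(1000, 3))
y = X @ np.array([1.5, -0.5, 2.0]) + 0.25
w, b, n_updates = minibatch_gradient_descent(X, y)
updates_per_epoch = n_updates // 50  # one update per batch of 100 points
```

Setting `batch_size` to the dataset size recovers batch gradient descent, and setting it to 1 recovers the stochastic variant.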